Thinking About Including AI in Your Leadership Strategy Plan?

ChatGPT has been a part of almost every leadership development conversation I’ve had in the last couple of weeks. How is AI impacting our culture, our education, our very identity?

I’ve been discussing this for a while. See this article in Leadership Now: How to Be an Engaging Leader in a World of Robotics, AI, and Digitization.

With the rise of artificial intelligence and ChatGPT, concerns are mounting on both micro and macro levels. This TEDx talk by a colleague of mine lays out a framework for understanding what we’re dealing with: Searching for a Theoretical for the World Now Emerging.

I found the viewpoint in the article below an interesting contribution to the ongoing discussion.

What are your thoughts? What are you seeing?

Here are the Ethical Questions We Should be Asking About AI

Publication: The Bismarck Tribune

By: Richard Kyte

Date: March 1st, 2023

You can read the article here

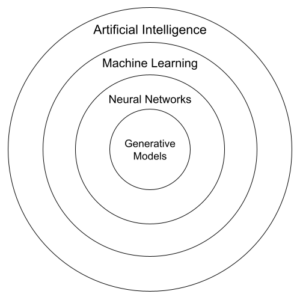

Our society has experienced plenty of disruptive technology over the past couple of centuries, but nothing has been as disruptive as artificial intelligence promises to be.

Ever since ChatGPT was released to the public in November, people have been scrambling to figure out what the social and economic ramifications will be. The potential benefits and harms are so varied, so significant and so speculative, that one can find opinions ranging all the way from doomsday scenarios to ushering in a future filled with vast improvements in every area of human life.

Several of these concerns were highlighted recently when advance testers with access to Microsoft’s Bing AI chatbot reported alarming conversations. Since those results have been so widely reported, I won’t describe them here. Let’s just say they were creepy. Microsoft’s response was to pull the chatbot from public use and send it back for further development.

But what happens next? What if companies such as Google, Microsoft and Amazon are able successfully to address the chief ethical concerns with AI? What if a version of AI is able to integrate seamlessly into our personal and professional lives, making it easier to find and use information, to process that information into useful documents, laws, policies, research papers, letters, designs, contracts, artworks, and who knows what else? And what if we are able to do that without manipulating, deceiving, exploiting, or otherwise harming anybody? Will that be good for humanity?

Consider the stated aim of the company that developed ChatGPT: “OpenAI’s mission is to ensure that artificial general intelligence (AGI) — by which we mean highly autonomous systems that outperform humans at most economically valuable work — benefits all of humanity.”

Imagine all the things that implies, all the ways in which human beings put words and images together to communicate with one another. And then imagine that much of that is done by some kind of entity that has no self-awareness, no personhood, but is capable of precisely imitating the full range of expressive activities that, up to this point in the world’s existence, have been the exclusive province of human beings.

Imagine that in the future an AI bot will be capable of writing letters to a friend, composing a note of condolence to comfort someone in sorrow, writing a speech to motivate people to support a cause, creating a sermon to be preached on Sunday, vows to be spoken at one’s wedding or a prayer to be said at a parent’s funeral.

Don’t suppose that people will not use AI for such things. Of course, they will. Once AI is advanced enough to simulate the subtleties of a wide range of human expression, it will certainly be called upon to help people on those occasions when expression is difficult, when it is most important to find just the right words for the occasion.

We had a glimpse of such a use recently when an administrator at Vanderbilt University used ChatGPT to compose an email to students in response to the mass shooting at Michigan State. The email called for promoting a “culture of respect and understanding” and urged members of the community to “continue to engage in conversations.” It closed with a plea to “come together as a community to reaffirm our commitment to caring for one another.”

Vanderbilt students were predictably outraged. We want to know that the words spoken to us, especially words used to move us — to comfort, console or encourage us — are sincere. We want assurance that what someone says to us is what they really mean.

The truth is, human beings have always wrestled with the question of whose words to trust. That is especially evident today when so many of our social relations are tainted by a deep and pervasive skepticism.

The assurance of sincerity, which is essential to so much we take for granted in our society, is acquired incrementally, through a

Will AI help us do that? Will AI help us find our way into deeper, more meaningful relationships with others? Will it help us to love our neighbors more genuinely? Or will it drive the wedge of skepticism deeper into the heart of our relationships, causing us to doubt the sincerity of all our interactions and become even more distrustful, suspicious, and lonely?

People working in technology fields tend to restrict what they call “ethics” to problems of implementation. They don’t ask whether the technology is inherently unethical. How will it shape our understanding of our lives together? Does the best, most well-intentioned and effective use pose the greatest threat?

Those are the questions I wish more people would ask.
